Hahahaha. It’s Friday.

A professor at the University of Cologne (Germany) submits a text to Nature in which he admits to not doing his work and using AI instead. Then data is lost through his own incompetence.

I’m a lazy idiot who makes a dumb mistake… what to do? Ahhhhh, I must make sure the whole world learns about it!

Within a couple of years of ChatGPT coming out, I had come to rely on the artificial-intelligence tool, for my work as a professor of plant sciences at the University of Cologne in Germany. Having signed up for OpenAI’s subscription plan, ChatGPT Plus, I used it as an assistant every day — to write e-mails, draft course descriptions, structure grant applications, revise publications, prepare lectures, create exams and analyse student responses, and even as an interactive tool as part of my teaching.

It was fast and flexible, and I found it reliable in a specific sense: it was always available, remembered the context of ongoing conversations and allowed me to retrieve and refine previous drafts. I was well aware that large language models such as those that power ChatGPT can produce seemingly confident but sometimes incorrect statements, so I never equated its reliability with factual accuracy, but instead relied on the continuity and apparent stability of the workspace.

But in August, I temporarily disabled the ‘data consent’ option because I wanted to see whether I would still have access to all of the model’s functions if I did not provide OpenAI with my data. At that moment, all of my chats were permanently deleted and the project folders were emptied — two years of carefully structured academic work disappeared. No warning appeared. There was no undo option. Just a blank page. Fortunately, I had saved partial copies of some conversations and materials, but large parts of my work were lost forever.

PS: If I remember correctly, putting AI and grant applications in the same paragraph is a big no-no (potential fraud). I hope a competent lawyer checked the text first; otherwise, pain is coming.

One AI getting misinformation from another AI

It’s going to get so much worse when answers to questions come from AI engines getting their info from AI output regurgitated a million times.

It’s going to be the computer version of Chinese whispers.

Chinese firm Zhipu AI is limiting access because demand now exceeds its server capacity.

When I read that, I thought of the technique I use to tame my bee swarms: keep them in a dark box and don’t feed them until they calm down.

Now, on a more serious note: I don’t have Facebook, Instagram or TikTok. But I do have a LinkedIn account, and I have noticed swarms of executives / leaders / ‘free thinkers’ and others (whom I don’t know and am not linked to) appearing in my feed with… interesting opinions about the beauties of the new US state.

Time to get rid of LinkedIn too (which in recent years has become just a stiff-upper-lip version of Facebook, full of people… full of themselves)!

AI Grok. Sounds like something one shits out of the arse.

It’s an insult to a great science fiction author.

Social media for AIs

Good Lord!!

I foresee at least another degree Fahrenheit being added to global temperatures.

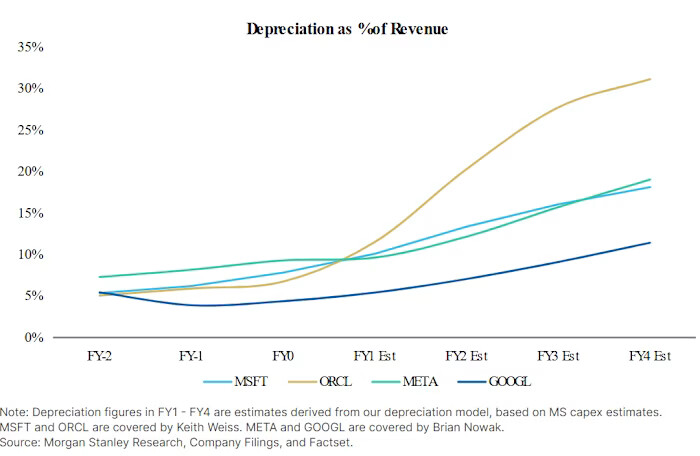

Super boring stuff: depreciation. I was reading something about data center depreciation in the FT, and it reminded me of the narrative from some years ago.

Indeed, when I was at university, everyone talked about how great the asset-light software companies were. So-called opinion leaders praised Apple for not even assembling its phones: the gold was in design, software development and management of intellectual property. Fast forward to 2025, and this is a good explanation of what is happening:

For over a decade, the Magnificent 7 (M7)— Apple, Microsoft, Amazon, Alphabet, Meta, Nvidia, and Tesla — were the embodiment of the asset-light revolution. They scaled globally without building factories or owning infrastructure on a massive scale. Their value came from intangible assets — software, networks, data, and brand power — not heavy machinery or fixed capital.

But this model is now being left behind. The Magnificent 7 are shifting from being software-first to infrastructure-first — from the digital lightness that once made them unstoppable to a new form of industrial heft. The reason is clear: the AI revolution has changed the rules of competition. Artificial intelligence, once expected to be the ultimate intangible technology, has become the most capital-intensive race in modern history. Collectively, the Magnificent 7 are on track to spend nearly $400 billion a year on AI-related capital expenditures. Globally, AI investment is expected to exceed $5 trillion within five years.

A decade ago, these firms spent around 4% of their revenue on capital expenditures. Today, it’s roughly 15%, and for some — such as Meta, Microsoft, and Alphabet — projected to reach 30% or more. These are not the metrics of software companies anymore; they are the ratios of utilities and telecom operators.

So the balance sheets of formerly asset-light companies are starting to look like those of telecom or energy companies, loaded with costly, depreciating infrastructure. The Magnificent 7 are becoming slow dinosaurs with high capex and low net margins. Apparently they have no choice: either they become slow dinosaurs, or they risk extinction if AI ever does deliver revenue and they haven’t invested.

As an ant in a world of horses, I’m a bit concerned that business leaders whose experience is all in software companies are now running something like an oil company. The chances of something going wrong through lack of experience and awareness are higher.

Back to the FT nerds: curious things are showing up on the balance sheets of the M7. The guidance on how long the hardware is depreciated over keeps getting longer. In 2020, a data center was depreciated over 4 years; in 2025, a data center lasts 6 years. And there are more tricks in the bag: depreciation only starts when the data center becomes operational, but the capex of building it goes into the asset column immediately. As consumers, we complain about the inflated prices of graphics cards and RAM; how will depreciation hit an asset bought at an inflated price? This chart captures the issue: depreciation is starting to eat up revenue and will eat up even more of it in 2026:

So the chart says that depreciation will hit in 2–3 years. The issue is that the chart may already look uglier without the accounting tricks. What if the assets are simply not there?
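To put numbers on the useful-life trick, here is a minimal sketch of plain straight-line depreciation. The $12 billion cost and the 2-year construction delay are hypothetical figures, not numbers from the FT or any company filing, and real accounting details (salvage value, component depreciation, impairments) are ignored.

```python
def straight_line_schedule(cost, useful_life_years, start_year=0):
    """Yearly straight-line depreciation expense for a single asset.

    cost              -- capitalized cost (hypothetical figure)
    useful_life_years -- number of years the cost is spread over
    start_year        -- first year the asset is in service; before that the
                         cost sits on the balance sheet and nothing is expensed
    """
    annual = cost / useful_life_years
    # Years before the asset goes live carry zero expense.
    return [0.0] * start_year + [annual] * useful_life_years


def book_values(cost, schedule):
    """Remaining (undepreciated) asset value at the end of each year."""
    values = []
    remaining = cost
    for expense in schedule:
        remaining -= expense
        values.append(remaining)
    return values


capex = 12.0  # hypothetical: 12 billion dollars of servers

four_year = straight_line_schedule(capex, 4)
six_year = straight_line_schedule(capex, 6)
delayed = straight_line_schedule(capex, 6, start_year=2)

print(four_year)  # [3.0, 3.0, 3.0, 3.0]
print(six_year)   # [2.0, 2.0, 2.0, 2.0, 2.0, 2.0]
print(delayed)    # [0.0, 0.0, 2.0, 2.0, 2.0, 2.0, 2.0, 2.0]
print(book_values(capex, delayed))  # [12.0, 12.0, 10.0, 8.0, 6.0, 4.0, 2.0, 0.0]
```

Same money spent, but stretching the life from 4 to 6 years cuts the yearly expense from 3 to 2, i.e. reported profit looks better by a third of the old charge. The delayed case shows the full 12 sitting in the asset column for two years with zero expense hitting the income statement.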

In the end, the only practical use of AI, apart from getting a few lines of Python and C++ code, will be to kill our retirement savings.

Just used AI to identify this car:

In my mind, my father, at around age four, is in the driver’s seat, and a little research suggested that a left-hand-drive (LHD) Model T circa 1930 is quite likely, maybe an import from Canada or assembled in NZ.

The real winners will be electricians, plumbers and construction workers.

capex depreciation

depreciation

AI isn’t just about software and code — it’s also about the real-world systems that make it work. Nvidia’s CEO is right: the AI revolution is not just digital; it’s physical.

Elon’s robots will take over plumbing

LOL!

I found this article quite informative and thought-provoking.

While sh*tters are still doing their sh*tting… freedom is not an excuse to sh*t on others…

https://www.japantimes.co.jp/business/2026/02/03/tech/grok-sexual-images-no-consent/

Get ready for AI advertising!

According to this Super Bowl ad:

But not with Claude, Lumo or Euria.

And here is another one: https://youtu.be/FBSam25u8O4

Yeah. That’s the hot air from all the blah-blah.

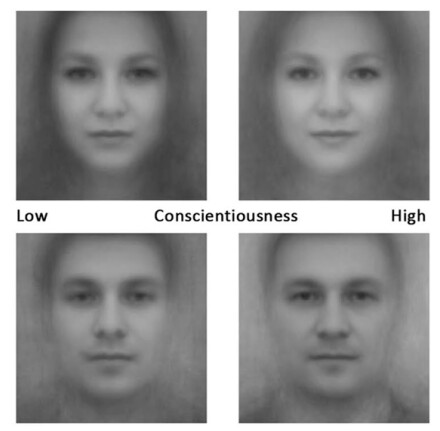

Phrenology is back!!!

# AI Personality Extraction from Faces: Labor Market Implications

Human capital—encompassing cognitive skills and personality traits—is critical for labor market success, yet the personality component remains difficult to measure at scale. Leveraging advances in artificial intelligence and comprehensive LinkedIn microdata, we extract the Big 5 personality traits from facial images of 96,000 MBA graduates, and demonstrate that this novel “Photo Big 5” predicts school rank, compensation, job seniority, industry choice, job transitions, and career advancement. Using administrative records from top-tier MBA programs, we find that the Photo Big 5 exhibits only modest correlations with cognitive measures like GPA and standardized test scores, yet offers comparable incremental predictive power for labor outcomes. Unlike traditional survey-based personality measures, the Photo Big 5 is readily accessible and potentially less susceptible to manipulation, making it suitable for wide adoption in academic research and hiring processes. However, its use in labor market screening raises ethical concerns regarding statistical discrimination and individual autonomy.

This is great: so conscientiousness is something you can measure in people’s faces:

It’s a bit sad that bright developers aim to build scientific racism instead of finding faster ways to solve partial differential equations with computers.

More seriously, the sad thing here is that 98% of the effort goes into identifying talent, 1.5% into developing it, and 0.5% into actually using it within a team.